6.2 Setting the target

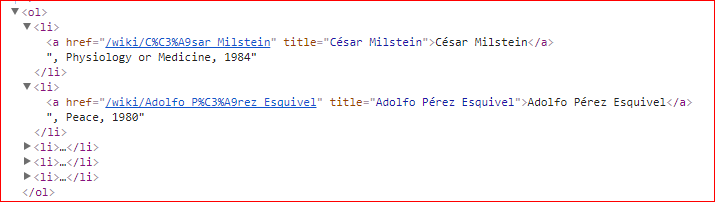

http://en.wikipedia.org/wiki/List_of_Nobel_laureates_by_country (a list of laureates for each country)

Scrapy was not installed, so I ran

>conda install -c https://conda.anaconda.org/anaconda scrapy

I expected only scrapy to be installed, but conda reported 18 new installs and 4 updates.

attrs: 15.2.0-py35_0 anaconda unknown

automat: 0.5.0-py35_0 anaconda unknown

constantly: 15.1.0-py35_0 anaconda unknown

cssselect: 1.0.1-py35_0 anaconda unknown

hyperlink: 17.1.1-py35_0 anaconda provides a pure-Python implementation of immutable URLs (?)

incremental: 16.10.1-py35_0 anaconda unknown

parsel: 1.2.0-py35_0 anaconda unknown

patch: 2.5.9-1 anaconda unknown

pyasn1-modules: 0.0.8-py35_0 anaconda unknown

pydispatcher: 2.0.5-py35_0 anaconda unknown

queuelib: 1.4.2-py35_0 anaconda unknown

scrapy: 1.3.3-py35_0 anaconda scraping tool

service_identity: 17.0.0-py35_0 anaconda service identity verification

twisted: 17.5.0-py35_0 anaconda unknown

w3lib: 1.17.0-py35_0 anaconda unknown

zope: 1.0-py35_0 anaconda web application framework

zope.interface: 4.4.2-py35_0 anaconda

anaconda: 2.4.1-np110py35_0 --> custom-py35_0 anaconda

conda: 4.3.24-py35_0 --> 4.3.25-py35_0 anaconda OS-agnostic, system-level binary package and environment manager

conda-env: 2.6.0-0 --> 2.6.0-0 anaconda unknown

spyder: 3.2.0-py35_0 --> 2.3.8-py35_1 anaconda IDE

With the install done, I ran

>scrapy startproject nobel_winners

which created the same folder structure as in the book.

__init__.py is empty.

items.py contains

# Define here the models for your scraped items
#
# See documentation in:
# http://doc.scrapy.org/en/latest/topics/items.html

import scrapy


class NobelWinnersItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    pass

This is the file that defines the items to be scraped.

middlewares.py

# -*- coding: utf-8 -*-

# Define here the models for your spider middleware
#
# See documentation in:
# http://doc.scrapy.org/en/latest/topics/spider-middleware.html

from scrapy import signals


class NobelWinnersSpiderMiddleware(object):
    # Not all methods need to be defined. If a method is not defined,
    # scrapy acts as if the spider middleware does not modify the
    # passed objects.

    @classmethod
    def from_crawler(cls, crawler):
        # This method is used by Scrapy to create your spiders.
        s = cls()
        crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
        return s

    def process_spider_input(response, spider):
        # Called for each response that goes through the spider
        # middleware and into the spider.

        # Should return None or raise an exception.
        return None

    def process_spider_output(response, result, spider):
        # Called with the results returned from the Spider, after
        # it has processed the response.

        # Must return an iterable of Request, dict or Item objects.
        for i in result:
            yield i

    def process_spider_exception(response, exception, spider):
        # Called when a spider or process_spider_input() method
        # (from other spider middleware) raises an exception.

        # Should return either None or an iterable of Response, dict
        # or Item objects.
        pass

    def process_start_requests(start_requests, spider):
        # Called with the start requests of the spider, and works
        # similarly to the process_spider_output() method, except
        # that it doesn’t have a response associated.

        # Must return only requests (not items).
        for r in start_requests:
            yield r

    def spider_opened(self, spider):
        spider.logger.info('Spider opened: %s' % spider.name)

Defines the connection to the spider (?)

pipelines.py

# -*- coding: utf-8 -*-

# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: http://doc.scrapy.org/en/latest/topics/item-pipeline.html


class NobelWinnersPipeline(object):
    def process_item(self, item, spider):
        return item

This is the file that defines the item pipeline.

settings.py

# -*- coding: utf-8 -*-

# Scrapy settings for nobel_winners project

#

# For simplicity, this file contains only settings considered important or

# commonly used. You can find more settings consulting the documentation:

#

# http://doc.scrapy.org/en/latest/topics/settings.html

# http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html

# http://scrapy.readthedocs.org/en/latest/topics/spider-middleware.html

BOT_NAME = 'nobel_winners'

SPIDER_MODULES = ['nobel_winners.spiders']

NEWSPIDER_MODULE = 'nobel_winners.spiders'

# Crawl responsibly by identifying yourself (and your website) on the user-agent

#USER_AGENT = 'nobel_winners (+http://www.yourdomain.com)'

# Obey robots.txt rules

ROBOTSTXT_OBEY = True

# Configure maximum concurrent requests performed by Scrapy (default: 16)

#CONCURRENT_REQUESTS = 32

# Configure a delay for requests for the same website (default: 0)

# See http://scrapy.readthedocs.org/en/latest/topics/settings.html#download-delay

# See also autothrottle settings and docs

#DOWNLOAD_DELAY = 3

# The download delay setting will honor only one of:

#CONCURRENT_REQUESTS_PER_DOMAIN = 16

#CONCURRENT_REQUESTS_PER_IP = 16

# Disable cookies (enabled by default)

#COOKIES_ENABLED = False

# Disable Telnet Console (enabled by default)

#TELNETCONSOLE_ENABLED = False

# Override the default request headers:

#DEFAULT_REQUEST_HEADERS = {

# 'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',

# 'Accept-Language': 'en',

#}

# Enable or disable spider middlewares

# See http://scrapy.readthedocs.org/en/latest/topics/spider-middleware.html

#SPIDER_MIDDLEWARES = {

# 'nobel_winners.middlewares.NobelWinnersSpiderMiddleware': 543,

#}

# Enable or disable downloader middlewares

# See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html

#DOWNLOADER_MIDDLEWARES = {

# 'nobel_winners.middlewares.MyCustomDownloaderMiddleware': 543,

#}

# Enable or disable extensions

# See http://scrapy.readthedocs.org/en/latest/topics/extensions.html

#EXTENSIONS = {

# 'scrapy.extensions.telnet.TelnetConsole': None,

#}

# Configure item pipelines

# See http://scrapy.readthedocs.org/en/latest/topics/item-pipeline.html

#ITEM_PIPELINES = {

# 'nobel_winners.pipelines.NobelWinnersPipeline': 300,

#}

# Enable and configure the AutoThrottle extension (disabled by default)

# See http://doc.scrapy.org/en/latest/topics/autothrottle.html

#AUTOTHROTTLE_ENABLED = True

# The initial download delay

#AUTOTHROTTLE_START_DELAY = 5

# The maximum download delay to be set in case of high latencies

#AUTOTHROTTLE_MAX_DELAY = 60

# The average number of requests Scrapy should be sending in parallel to

# each remote server

#AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

# Enable showing throttling stats for every response received:

#AUTOTHROTTLE_DEBUG = False

# Enable and configure HTTP caching (disabled by default)

# See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

#HTTPCACHE_ENABLED = True

#HTTPCACHE_EXPIRATION_SECS = 0

#HTTPCACHE_DIR = 'httpcache'

#HTTPCACHE_IGNORE_HTTP_CODES = []

#HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

This is the project settings file.

scrapy.cfg

# Automatically created by: scrapy startproject

#

# For more information about the [deploy] section see:

# https://scrapyd.readthedocs.org/en/latest/deploy.html

[settings]

default = nobel_winners.settings

[deploy]

#url = http://localhost:6800/

project = nobel_winners

This is also a configuration file.

from bs4 import BeautifulSoup
import requests

BASE_URL = "http://en.wikipedia.org"
HEADERS = {'User-Agent': 'Mozilla/5.0'}


def get_column_titles(table):
    # Return the header cells (skipping the first, Year) as name/href dicts.
    cols = []
    for th in table.select_one('tr').select('th')[1:]:
        link = th.select_one('a')
        if link:
            cols.append({'name': link.text, 'href': link.attrs['href']})
        else:
            cols.append({'name': th.text, 'href': None})
    return cols


def get_Nobel_soup():
    # Fetch the laureates page and parse it with the lxml parser.
    response = requests.get(BASE_URL + '/wiki/List_of_Nobel_laureates', headers=HEADERS)
    return BeautifulSoup(response.content, "lxml")


soup = get_Nobel_soup()
table = soup.select_one('table.sortable.wikitable')
d = get_column_titles(table)
for item in d:
    print(item['name'], " ", item['href'])

Result: the function extracts the table header and returns each prize name, with the link to its description page, as a list of dicts.

from bs4 import BeautifulSoup
import requests

BASE_URL = "http://en.wikipedia.org"
HEADERS = {'User-Agent': 'Mozilla/5.0'}


def get_nobel_winners(table):
    # Walk the data rows and collect one dict per laureate link.
    cols = get_column_titles(table)
    winners = []
    for row in table.select('tr')[1:-2]:
        year = int(row.select_one('td').text)
        for i, td in enumerate(row.select('td')[1:]):
            for winner in td.select('a'):
                href = winner.attrs['href']
                # Skip footnote anchors; they are not laureates.
                if not href.startswith('#endnote'):
                    winners.append({'year': year,
                                    'category': cols[i]['name'],
                                    'name': winner.text,
                                    'link': href})
    return winners


def get_column_titles(table):
    # Return the header cells (skipping the first, Year) as name/href dicts.
    cols = []
    for th in table.select_one('tr').select('th')[1:]:
        link = th.select_one('a')
        if link:
            cols.append({'name': link.text, 'href': link.attrs['href']})
        else:
            cols.append({'name': th.text, 'href': None})
    return cols


def get_Nobel_soup():
    # Fetch the laureates page and parse it with the lxml parser.
    response = requests.get(BASE_URL + '/wiki/List_of_Nobel_laureates', headers=HEADERS)
    return BeautifulSoup(response.content, "lxml")


soup = get_Nobel_soup()
table = soup.select_one('table.sortable.wikitable')
d = get_nobel_winners(table)
print(str(d).encode('UTF-8'))

Result: the function returns the laureate data (award year, laureate name, category (which prize was won), and link address) as a list of dicts.
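The `link` values are site-relative paths, so they need the base URL before they can be requested. A minimal sketch of that step, assuming the same `BASE_URL` as the scripts above; the `winner` dict reuses the 1901 Physics entry from the table, and `absolute_url` is a hypothetical helper name, not something from the book.

```python
from urllib.parse import urljoin, unquote

BASE_URL = "http://en.wikipedia.org"

# One entry in the shape returned by get_nobel_winners() (1901 Physics row).
winner = {
    'year': 1901,
    'category': 'Physics',
    'name': 'Wilhelm Röntgen',
    'link': '/wiki/Wilhelm_R%C3%B6ntgen',
}


def absolute_url(link, base=BASE_URL):
    """Resolve a site-relative link against the base URL (hypothetical helper)."""
    return urljoin(base, link)


full_url = absolute_url(winner['link'])
print(full_url)

# The percent-encoded page title can be decoded for display.
page_title = unquote(winner['link'].rsplit('/', 1)[-1])
print(page_title)
```

`urljoin` is used rather than string concatenation so that a link that is already absolute would pass through unchanged.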

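requests_cache was not installed.

>pip install requests_cache

installed it; the version was 0.4.13.

I could not work out the get_url function inside get_winner_nationality() in Example 5-3, so I skipped it.

I could not obtain a Twitter access token and the other credentials, so that part is omitted.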

from bs4 import BeautifulSoup
import requests

BASE_URL = "http://en.wikipedia.org"
HEADERS = {'User-Agent': 'Mozilla/5.0'}


def get_Nobel_soup():
    # Fetch the laureates page and parse it with the lxml parser.
    response = requests.get(BASE_URL + '/wiki/List_of_Nobel_laureates', headers=HEADERS)
    return BeautifulSoup(response.content, "lxml")


get_Nobel_soup()

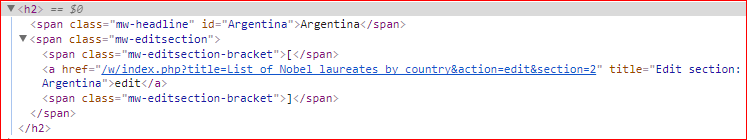

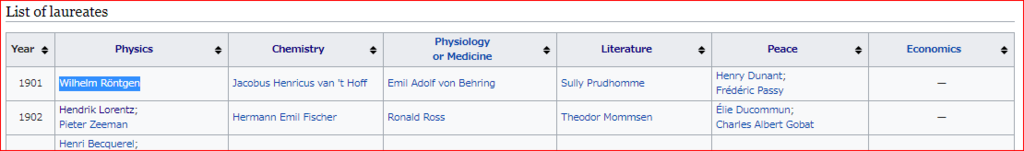

This code retrieves the table shown below.

Part of the retrieved HTML

The table definition starts here

b'<table class="wikitable sortable">\n

Table header definition

Row tag

<tr>\n

Header of column 1: the year

<th>Year</th>\n

Header of column 2: the Physics column; the link target is also specified

<th width="18%"><a href="/wiki/List_of_Nobel_laureates_in_Physics" title="List of Nobel laureates in Physics">Physics</a></th>\n

Header of column 3: the Chemistry column; the link target is also specified

<th width="16%"><a href="/wiki/List_of_Nobel_laureates_in_Chemistry" title="List of Nobel laureates in Chemistry">Chemistry</a>

</th>\n

Header of column 4: the Physiology or Medicine column; the link target is also specified

<th width="18%"><a href="/wiki/List_of_Nobel_laureates_in_Physiology_or_Medicine" title="List of Nobel laureates in Physiology or Medicine">Physiology<br/>\nor Medicine</a></th>\n

Header of column 5: the Literature column; the link target is also specified

<th width="16%"><a href="/wiki/List_of_Nobel_laureates_in_Literature" title="List of Nobel laureates in Literature">Literature</a></th>\n

Header of column 6: the Peace column; the link target is also specified

<th width="16%"><a href="/wiki/List_of_Nobel_Peace_Prize_laureates" title="List of Nobel Peace Prize laureates">Peace</a></th>\n

Header of column 7: the Economics column; the link target is also specified

<th width="15%"><a class="mw-redirect" href="/wiki/List_of_Nobel_laureates_in_Economics" title="List of Nobel laureates in Economics">Economics</a></th>\n

End of the row tag

</tr>\n

The table body starts here

Row tag

<tr>\n

Table data: column 1 is the year

<td align="center">1901</td>\n

Physics laureate: the data for Röntgen

<td>

<span class="sortkey">

R\xc3\xb6ntgen, Wilhelm

</span>

The laureate's name and the address of that person's page

<span class="vcard">

<span class="fn">

<a href="/wiki/Wilhelm_R%C3%B6ntgen" title="Wilhelm R\xc3\xb6ntgen">Wilhelm R\xc3\xb6ntgen</a>

</span>

</span>

End of the data cell; after this, the laureates for columns 2 through 7 are listed

</td>\n

Finally, the table tag is closed.

</table>'
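To make the header structure concrete, here is a minimal offline sketch that pulls the column names and links out of an abridged copy of the header row above. It swaps BeautifulSoup for the standard library's `html.parser` so that it runs without a network call or extra packages; `HeaderParser` and `HEADER_HTML` are names introduced only for this illustration.

```python
from html.parser import HTMLParser

# Abridged header row from the fragment above (title attributes dropped).
HEADER_HTML = (
    '<tr>'
    '<th>Year</th>'
    '<th width="18%"><a href="/wiki/List_of_Nobel_laureates_in_Physics">Physics</a></th>'
    '<th width="16%"><a href="/wiki/List_of_Nobel_laureates_in_Chemistry">Chemistry</a></th>'
    '</tr>'
)


class HeaderParser(HTMLParser):
    """Collect a {'name', 'href'} dict for every <th> cell."""

    def __init__(self):
        super().__init__()
        self.cols = []
        self._in_th = False

    def handle_starttag(self, tag, attrs):
        if tag == 'th':
            self._in_th = True
            self.cols.append({'name': '', 'href': None})
        elif tag == 'a' and self._in_th:
            self.cols[-1]['href'] = dict(attrs).get('href')

    def handle_endtag(self, tag):
        if tag == 'th':
            self._in_th = False

    def handle_data(self, data):
        if self._in_th:
            self.cols[-1]['name'] += data.strip()


parser = HeaderParser()
parser.feed(HEADER_HTML)
cols = parser.cols[1:]  # skip the Year column, as get_column_titles() does
for col in cols:
    print(col['name'], col['href'])
```

The result has the same shape as the output of `get_column_titles()`: one dict per prize column, with `href` left as `None` for any header that has no link.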

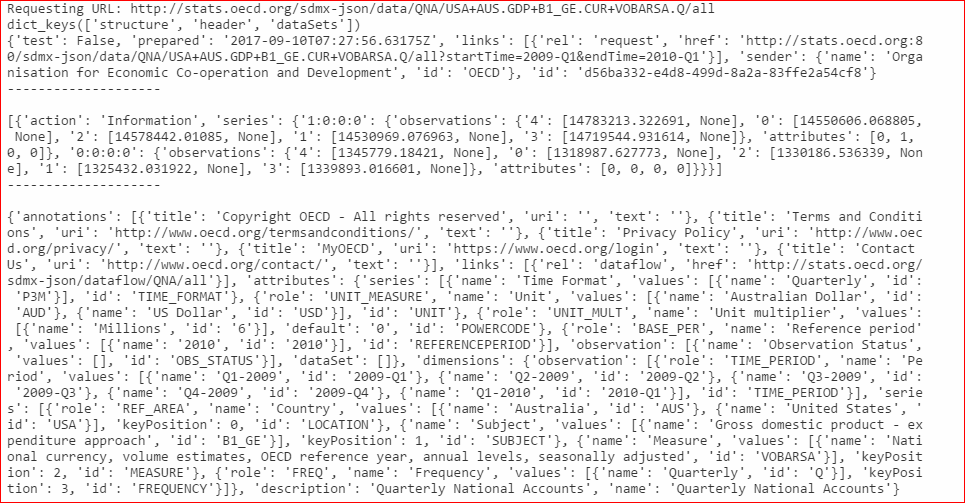

import requests

OECD_ROOT_URL = 'http://stats.oecd.org/sdmx-json/data'


def make_OECD_request(dsname, dimensions, params=None, root_dir=OECD_ROOT_URL):
    # Build the SDMX-JSON URL: codes joined with '+', dimensions with '.'.
    if params is None:
        params = {}
    dim_args = ['+'.join(d) for d in dimensions]
    dim_str = '.'.join(dim_args)
    url = root_dir + '/' + dsname + '/' + dim_str + '/all'
    print('Requesting URL: ' + url)
    return requests.get(url, params=params)


response = make_OECD_request(
    'QNA',
    (('USA', 'AUS'), ('GDP', 'B1_GE'), ('CUR', 'VOBARSA'), ('Q',)),
    {'startTime': '2009-Q1', 'endTime': '2010-Q1'})

if response.status_code == 200:
    data = response.json()
    print(data['header'])
    print('--------------------\n')
    print(data['dataSets'])
    print('--------------------\n')
    print(data['structure'])
    print(data.keys())
else:
    print(response.status_code)

Result

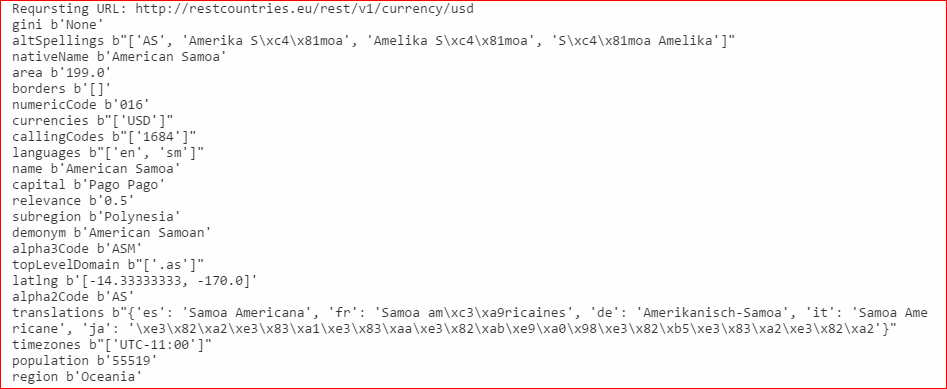
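The URL construction can be checked without touching the network. The sketch below isolates just that step; `build_oecd_url` is a hypothetical name for the string-building part of the request function, and the dimension values are the ones used in the call above.

```python
OECD_ROOT_URL = 'http://stats.oecd.org/sdmx-json/data'


def build_oecd_url(dsname, dimensions, root_dir=OECD_ROOT_URL):
    """Join each dimension's codes with '+', then the dimensions with '.'."""
    dim_args = ['+'.join(d) for d in dimensions]
    return root_dir + '/' + dsname + '/' + '.'.join(dim_args) + '/all'


dimensions = (('USA', 'AUS'), ('GDP', 'B1_GE'), ('CUR', 'VOBARSA'), ('Q',))
url = build_oecd_url('QNA', dimensions)
print(url)

# A one-element dimension needs a trailing comma to be a tuple.
# Without it the value is a plain string and join() splits it by character:
split_by_char = '+'.join(('GDP'))   # 'G+D+P'
kept_whole = '+'.join(('GDP',))     # 'GDP'
print(split_by_char, kept_whole)
```

For a single-letter code such as `Q` both spellings happen to give the same URL, which is why the missing comma is easy to overlook.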

import requests

REST_EU_ROOT_URL = 'http://restcountries.eu/rest/v1'


def REST_country_request(field='all', name='', params=None):
    # Query the REST Countries API by field and value, or fetch everything.
    headers = {'User-Agent': 'Mozilla/5.0'}
    if params is None:
        params = {}
    if field == 'all':
        return requests.get(REST_EU_ROOT_URL + '/all')
    url = '%s/%s/%s' % (REST_EU_ROOT_URL, field, name)
    print('Requesting URL: ' + url)
    response = requests.get(url, params=params, headers=headers)
    if not response.status_code == 200:
        raise Exception('Request failed with status code ' + str(response.status_code))
    return response


response = REST_country_request('currency', 'usd')
json = response.json()
for key, value in json[0].items():
    print(key, str(value).encode('UTF-8'))

Result (the Japanese text is displayed incorrectly)
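One plausible cause of the unreadable Japanese (an assumption; the notes do not diagnose it) is that `str(value).encode('UTF-8')` produces a `bytes` object, and printing `bytes` shows `\x..` escapes instead of characters. The sketch below reproduces that with a hypothetical stand-in value and shows `json.dumps(..., ensure_ascii=False)` as one way to keep the text readable.

```python
import json

# Hypothetical stand-in for one value from the response.
value = {'ja': 'アメリカ合衆国'}

# Encoding turns the text into bytes; print() then shows escape sequences.
as_bytes = str(value).encode('UTF-8')
print(as_bytes)

# Serialising without ASCII escaping keeps the characters as they are.
readable = json.dumps(value, ensure_ascii=False)
print(readable)
```

Whether the second form displays correctly still depends on the console's own encoding, which may be why the original script encoded the output in the first place.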

import requests

response = requests.get("http://en.wikipedia.org/wiki/Nobel_Prize")

# Iterating over a Response yields the body in chunks of bytes.
for chunk in response:
    print(chunk)

Result

A huge HTML document comes back.

The retrieved page

import requests

response = requests.get("http://en.wikipedia.org/wiki/Nobel_Prize")

print(dir(response))

Result

__attrs__

__bool__

__class__

__delattr__

__dict__

__dir__

__doc__

__eq__

__format__

__ge__

__getattribute__

__getstate__

__gt__

__hash__

__init__

__iter__

__le__

__lt__

__module__

__ne__

__new__

__nonzero__

__reduce__

__reduce_ex__

__repr__

__setattr__

__setstate__

__sizeof__

__str__

__subclasshook__

__weakref__

_content

_content_consumed

apparent_encoding

close

connection

content

cookies

elapsed

encoding

headers

history

is_permanent_redirect

is_redirect

iter_content

iter_lines

json

links

ok

raise_for_status

raw

reason

request

status_code

text

url


import requests

response = requests.get("http://en.wikipedia.org/wiki/Nobel_Prize")

print(response.status_code)

Result

200

import requests

response = requests.get("http://en.wikipedia.org/wiki/Nobel_Prize")

print(response.headers)

Result (partial)

import requests

response = requests.get("http://en.wikipedia.org/wiki/Nobel_Prize")

print(response.text.encode('utf-8'))

Result (omitted because the output is huge)

import requests

response = requests.get("https://chhs.data.ca.gov/api/views/pbxw-hhq8/rows.json?accessType=DOWNLOAD")

print(response.status_code)

Result

404

Searching the site for "pbxw-hhq8" suggests that

https://data.chhs.ca.gov/dataset/food-affordability-2006-2010 is the dataset in question. It was offered as CSV, PDF, and XLS, but not as JSON.

<!-- index.html -->

<!DOCTYPE html>

<meta charset="utf-8">

<style>

svg#chart{

background: lightgray;

}

</style>

<svg id="chart" width="300" height="225">

<circle r="15" cx="100" cy="50"></circle>

</svg>

<body>

<script src="http://d3js.org/d3.v3.min.js"></script>

<script src="script.js"></script>

</body>

Result

<!-- index.html -->

<!DOCTYPE html>

<meta charset="utf-8">

<style>

svg#chart{

background: lightgray;

}

#chart circle{fill:lightblue}

</style>

<svg id="chart" width="300" height="225">

<circle r="15" cx="100" cy="50"></circle>

</svg>

<body>

<script src="http://d3js.org/d3.v3.min.js"></script>

<script src="script.js"></script>

</body>

Result

<!-- index.html -->

<!DOCTYPE html>

<meta charset="utf-8">

<style>

svg#chart{

background: lightcoral;

}

#chart circle{fill:lightblue}

#chart line{stroke: #555555;stroke-width: 2}

#chart rect{stroke: red;fill: white}

#chart polygon{fill:green}

</style>

<svg id="chart" width="300" height="225">

<circle r="15" cx="100" cy="50"></circle>

<line x1="20" y1="20" x2="20" y2="130"></line>

<line x1="20" y1="130" x2="280" y2="130"></line>

<rect x="240" y="5" width="55" height="30"></rect>

<polygon points="210,100,230,100,220,80"></polygon>

</svg>

<body>

<script src="http://d3js.org/d3.v3.min.js"></script>

<script src="script.js"></script>

</body>

Result

<!-- index.html -->

<!DOCTYPE html>

<meta charset="utf-8">

<style>

svg#chart{

background: lightcoral;

}

#chart circle{fill:lightblue}

#chart line{stroke: #555555;stroke-width: 2}

#chart rect{stroke: red;fill: white}

#chart polygon{fill:green}

</style>

<svg id="chart" width="300" height="225">

<circle r="15" cx="100" cy="50"></circle>

<line x1="20" y1="20" x2="20" y2="130"></line>

<line x1="20" y1="130" x2="280" y2="130"></line>

<rect x="240" y="5" width="55" height="30"></rect>

<polygon points="210,100,230,100,220,80"></polygon>

<text id="title" text-anchor="middle" x="150" y="20">

A Dummy Chart

</text>

<text x="20" y="20" transform="rotate(-90,20,20)" text-anchor="end" dy="0.71em">

y axis label

</text>

</svg>

<body>

<script src="http://d3js.org/d3.v3.min.js"></script>

<script src="script.js"></script>

</body>

Result

<!-- index.html -->

<!DOCTYPE html>

<meta charset="utf-8">

<style>

svg#chart{

background: lightcoral;

}

#chart circle{fill:lightblue}

#chart line{stroke: #555555;stroke-width: 2}

#chart rect{stroke: red;fill: white}

#chart polygon{fill:green}

#chart path{stroke:red; fill:none}

</style>

<svg id="chart" width="300" height="225">

<circle r="15" cx="100" cy="50"></circle>

<line x1="20" y1="20" x2="20" y2="130"></line>

<line x1="20" y1="130" x2="280" y2="130"></line>

<rect x="240" y="5" width="55" height="30"></rect>

<polygon points="210,100,230,100,220,80"></polygon>

<text id="title" text-anchor="middle" x="150" y="20">

A Dummy Chart

</text>

<text x="20" y="20" transform="rotate(-90,20,20)" text-anchor="end" dy="0.71em">

y axis label

</text>

<path d="M20 130L60 70L110 100L160 45"></path>

</svg>

<body>

<script src="http://d3js.org/d3.v3.min.js"></script>

<script src="script.js"></script>

</body>

Result

<!-- index.html -->

<!DOCTYPE html>

<meta charset="utf-8">

<style>

svg#chart{

background: lightgray;

}

#chart path{stroke:red; fill:none}

</style>

<svg id="chart" width="300" height="150">

<path d="M40 40

A30 40

0 0 1

80 80

A50 50 0 0 1 160 80

A30 30 0 0 1 190 80

"></path>

</svg>

<body>

<script src="http://d3js.org/d3.v3.min.js"></script>

<script src="script.js"></script>

</body>

Result

<!-- index.html -->

<!DOCTYPE html>

<meta charset="utf-8">

<style>

svg#chart{

background: lightgray;

}

#chart path{stroke:red; fill:none}

</style>

<svg id="chart" width="300" height="150">

<path d="M40 80

A30 40 0 0 1 80 80

A30 40 0 0 0 120 80

A30 40 0 1 0 160 80

A30 40 0 1 1 200 80

"></path>

</svg>

<body>

<script src="http://d3js.org/d3.v3.min.js"></script>

<script src="script.js"></script>

</body>

Result

<!-- index.html -->

<!DOCTYPE html>

<meta charset="utf-8">

<style>

svg#chart{

background: lightgray;

}

#chart path{stroke:red; fill:none}

</style>

<svg id="chart" width="300" height="150">

<rect width="20" height="40" transform="translate(60,55)" fill="blue"/>

<rect width="20" height="40" transform="translate(120,55),rotate(45)" fill="blue"/>

<rect width="20" height="40" transform="translate(180,55),scale(0.5)" fill="blue"/>

<rect width="20" height="40" transform="translate(240,55),rotate(45),scale(0.5)" fill="blue"/>

</svg>

<body>

<script src="http://d3js.org/d3.v3.min.js"></script>

<script src="script.js"></script>

</body>

Result
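The order of operations in a `transform` list is easy to get wrong, so here is a small numeric sketch of what happens to the corners of the fourth rect above. It assumes the usual SVG rule that a transform list is applied to a point from right to left (scale first, then rotate, then translate); the helper names are made up for this example.

```python
import math


def translate(tx, ty):
    return lambda p: (p[0] + tx, p[1] + ty)


def rotate(deg):
    a = math.radians(deg)
    return lambda p: (p[0] * math.cos(a) - p[1] * math.sin(a),
                      p[0] * math.sin(a) + p[1] * math.cos(a))


def scale(s):
    return lambda p: (p[0] * s, p[1] * s)


def apply(transforms, point):
    """Apply a transform list to a point, rightmost transform first."""
    for t in reversed(transforms):
        point = t(point)
    return point


# Fourth rect: transform="translate(240,55),rotate(45),scale(0.5)"
chain = [translate(240, 55), rotate(45), scale(0.5)]

# Corners of the untransformed 20 x 40 rect.
for corner in [(0, 0), (20, 0), (20, 40), (0, 40)]:
    x, y = apply(chain, corner)
    print(corner, '->', (round(x, 2), round(y, 2)))
```

The origin corner lands exactly on the translate offset, which is a quick way to confirm that the translation is the last step applied.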

<!-- index.html -->

<!DOCTYPE html>

<meta charset="utf-8">

<style>

svg#chart{

background: lightgray;

}

</style>

<svg id="chart" width="300" height="150">

<g id="shapes" transform="translate(150,75)">

<circle cx="50" cy="0" r="25" fill="red"/>

<rect x="30" y="10" width="40" height="20" fill="blue"/>

<path d="M-20 -10 L50 -10L10 60Z" fill="green"/>

<circle r="10" fill="yellow"/>

</g>

</svg>

<body>

<script src="http://d3js.org/d3.v3.min.js"></script>

<script src="script.js"></script>

</body>

Result

<!-- index.html -->

<!DOCTYPE html>

<meta charset="utf-8">

<style>

svg#chart{

background: lightgray;

}

#chart circle{opacity:0.33}

</style>

<svg id="chart" width="300" height="150">

<g transform="translate(150,75)">

<circle cx="0" cy="-20" r="30" fill="red"/>

<circle cx="17.3" cy="10" r="30" fill="green"/>

<circle cx="-17.3" cy="10" r="30" fill="blue"/>

</g>

</svg>

<body>

<script src="http://d3js.org/d3.v3.min.js"></script>

<script src="script.js"></script>

</body>

Result
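The three circle centres above are not arbitrary: they appear to sit 120 degrees apart on a ring of radius 20 around the group origin. The sketch below recomputes them under that assumption; the radius and angles are inferred from the coordinates, not stated in the source, and `centres` is a hypothetical helper.

```python
import math


def centres(radius, angles_deg):
    """Return (cx, cy) rounded to one decimal for each angle on the ring."""
    points = []
    for angle in angles_deg:
        a = math.radians(angle)
        points.append((round(radius * math.cos(a), 1),
                       round(radius * math.sin(a), 1)))
    return points


# SVG's y axis points down, so -90 degrees is straight up.
colours = ['red', 'green', 'blue']
for colour, (cx, cy) in zip(colours, centres(20, [-90, 30, 150])):
    print(colour, cx, cy)
```

Because the ring radius (20) is smaller than the circle radius (30), all three circles overlap in the middle, which is what makes the 0.33 opacity blend visible.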

4.7.11 SVG with JavaScript

index.html

<!-- index.html -->

<!DOCTYPE html>

<meta charset="utf-8">

<style>

svg#chart{

background: lightgray;

}

#chart circle{fill:red}

</style>

<body>

<svg id="chart" >

</svg>

<script src="http://d3js.org/d3.v3.min.js"></script>

<script src="script.js"></script>

</body>

script.js

var chartCircles = function(data){

var chart = d3.select('#chart');

chart.attr('height',data.height).attr('width',data.width);

chart.selectAll('circle').data(data.circle)

.enter()

.append('circle')

.attr('cx',function(d){return d.x})

.attr('cy',function(d){return d.y})

.attr('r',function(d){return d.r});

};

var data ={

width:300,height:150,

circle:[

{'x':50,'y':30,'r':20},

{'x':70,'y':80,'r':10},

{'x':160,'y':60,'r':10},

{'x':200,'y':100,'r':5}

]

};

chartCircles(data);

Result
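For comparison with the Python chapters, the same data-to-markup mapping can be sketched in Python. This is only an illustration of the markup the D3 data join above is expected to leave in the DOM (one `circle` element per data entry), not a replacement for D3; `render_svg` is a hypothetical helper.

```python
data = {
    'width': 300, 'height': 150,
    'circle': [
        {'x': 50, 'y': 30, 'r': 20},
        {'x': 70, 'y': 80, 'r': 10},
        {'x': 160, 'y': 60, 'r': 10},
        {'x': 200, 'y': 100, 'r': 5},
    ],
}


def render_svg(data):
    """Build an SVG string with one <circle> per entry in data['circle']."""
    circles = ''.join(
        '<circle cx="{x}" cy="{y}" r="{r}"></circle>'.format(**c)
        for c in data['circle']
    )
    return '<svg id="chart" height="{h}" width="{w}">{c}</svg>'.format(
        h=data['height'], w=data['width'], c=circles)


svg = render_svg(data)
print(svg)
```

The generator expression plays the role of `.data(...).enter().append('circle')`: every entry without an existing element gets a new one, with its attributes read from the entry.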